Though I carry both my G1 and Diamond around, I consider the G1 to be my primary device. I'm a little disappointed by one aspect of it though. The G1 doesn't fit snugly between my tachometer and speedometer in my car like my Diamond does:

Tile Client Update: New Features and Fixes

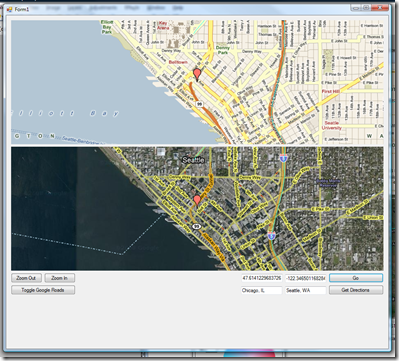

As a result of my work on GL Maps, I made several fixes, feature enhancements, and extensions to the original Tile Client and the sample code. Pictures and captions for your viewing pleasure (download is at very bottom):

I updated the desktop sample to demonstrate how to use a MapOverlay to put pushpins on the map. It also demonstrates how to use CompositeMapSession to blend multiple sessions together, such as:

- Satellites + Roads

- Streets + Traffic

The desktop sample has also been updated to show how to retrieve and use directions from either Google or Virtual Earth.

The samples also demonstrate how to provide a custom refresh bitmap for when the tile is being downloaded.

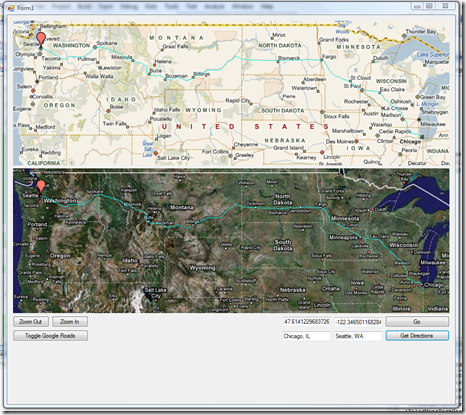

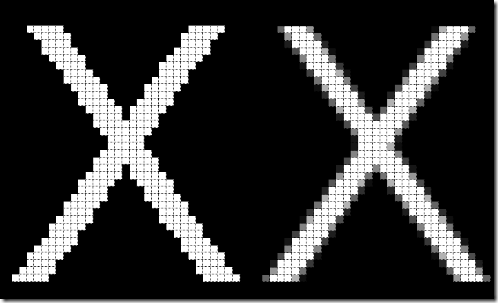

But, refresh bitmaps are tacky! So, the Tile client now uses parent and child tiles (tiles from other zoom levels) to render temporarily if the tile is missing! On the left you can see the map using parent tiles to render while the tiles are being downloaded. On the right you see the map again, in full detail, when the tiles have completed downloading.

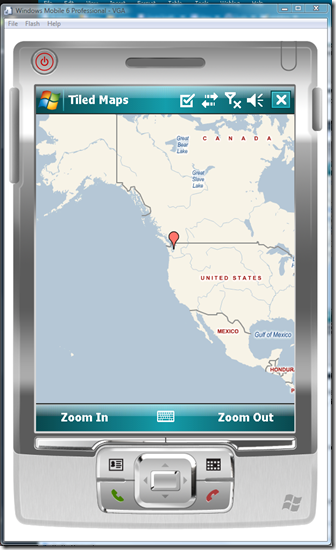

And a much requested feature: Tiles and overlays now support transparencies on Windows Mobile! Above you can see a MapOverlay that is using alpha blending.

Change Log:

- Developers must now instantiate a GraphicsRenderer and use that to render the map, rather than passing a Graphics object directly. This change was made to allow abstraction of the rendering device to support the 3D rendering a la GL Maps.

- Tiles are now cached to disk. They will be refreshed from server every 2 days.

- Fixed Google Directions retrieval.

- Implemented "smart" tile rendering. If a tile is being downloaded, the parent or child tiles are used to render its content.

- Fixed bug where MapOverlays were not being affected by the Offset property.

- Implemented transparencies on Windows Mobile. To retrieve and use a transparent bitmap, simply call GraphicsRenderer.LoadBitmap! See the Windows Mobile sample for more detail.

- Windows Mobile now converts bitmaps to 16bpp automatically after retrieval. This is a huge performance improvement, because instead of doing the pixel format conversion per paint, it happens only once.

- Updated both samples to demonstrate more features.

- Added a TiledMapSession.ClearAgedTiles(N) method to clear the tile cache of tiles that haven't been used in N milliseconds. This is useful for Windows Mobile where memory is a constraint, but clearing all tiles is undesirable.
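To make the idea behind ClearAgedTiles(N) concrete, here is a minimal sketch of age-based eviction. It is not the Tile Client's actual implementation: the `AgedTileCache` class, its `Put`/`TryGet` methods, and the injected clock are all hypothetical names made up for illustration. Only the notion of dropping tiles that have not been used in N milliseconds comes from the change log.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical stand-in for a tile cache; not the Tile Client's real types.
public class AgedTileCache<TKey, TTile>
{
    private class Entry
    {
        public TTile Tile;
        public long LastUsedMs;
    }

    private readonly Dictionary<TKey, Entry> myEntries = new Dictionary<TKey, Entry>();
    private readonly Func<long> myClock;

    // The clock (in milliseconds) is injected so the cache can be exercised without waiting.
    public AgedTileCache(Func<long> clockMs)
    {
        myClock = clockMs;
    }

    public int Count { get { return myEntries.Count; } }

    public void Put(TKey key, TTile tile)
    {
        myEntries[key] = new Entry { Tile = tile, LastUsedMs = myClock() };
    }

    // Reading a tile counts as a use and refreshes its timestamp.
    public bool TryGet(TKey key, out TTile tile)
    {
        Entry entry;
        if (myEntries.TryGetValue(key, out entry))
        {
            entry.LastUsedMs = myClock();
            tile = entry.Tile;
            return true;
        }
        tile = default(TTile);
        return false;
    }

    // Drop every tile that has not been used in the last maxAgeMs milliseconds.
    // Returns the number of tiles removed.
    public int ClearAgedTiles(long maxAgeMs)
    {
        long now = myClock();
        List<TKey> stale = new List<TKey>();
        foreach (KeyValuePair<TKey, Entry> pair in myEntries)
        {
            if (now - pair.Value.LastUsedMs > maxAgeMs)
                stale.Add(pair.Key);
        }
        // Remove in a second pass; a Dictionary cannot be modified while enumerating it.
        foreach (TKey key in stale)
            myEntries.Remove(key);
        return stale.Count;
    }
}
```

On a memory-constrained device, something like this could be called from a timer or after a pan/zoom, so that tiles still on screen survive while tiles from an area visited minutes ago are released.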

Manually Installing the Android Eclipse Plugin

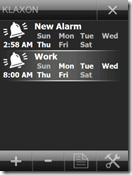

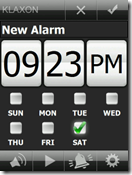

Klaxon is now Open Source

As of late, I've found myself less and less interested in Klaxon. This is mostly because I'm working on some really cool projects both at home and at work, on both Windows Mobile and Android (nothing I can talk about yet). But feature requests keep coming in, and I simply don't have time to keep up with them anymore. So after much deliberation, I decided to just open source Klaxon.

If any developer wishes to pick up where I left off, please feel free to contact me. I'm willing to give a would-be developer access to my source code repository and my blog to keep this project alive. :)

Developing and Debugging Android Applications on Windows Vista/Server 2008 x64

Google recently released their Windows USB driver for the Android Debug Bridge. This driver allows you to deploy and debug Android applications on a real device. And it's a snap to install and use on a 32 bit Windows operating system. But if you have a Windows x64 OS, you are outta luck. There is no 64 bit driver.

I mulled over a couple of options, like switching back to a 32 bit OS or dual booting Windows Server 2008 with Linux or Vista. Those are all pretty cruddy solutions, and as I was driving home, an idea dawned on me that was crazy enough to work. VMWare has a feature that allows you to take a USB port on a host machine and delegate it to a client VM. I was hoping that this would work with the Android Debug Bridge. I installed VMWare Player and grabbed an Ubuntu appliance, and voila, it worked!

Basic instructions to do this on your own:

- Get VMWare Player.

- Download Ubuntu from the appliance list on the VMWare website.

- Open the VM and update everything in Ubuntu. (The default user/password on the appliance I downloaded was user/user).

- Follow these instructions to set up Ubuntu to recognize and connect to your phone.

- In Ubuntu, download Eclipse and the Android SDK as per the instructions found on Google.

- In Ubuntu, download the JDK using the "sudo apt-get install sun-java6-jdk" command.

- Set up your Eclipse environment per the instructions found on Google.

- Plug in your phone, and give VMWare Player control of it. For me, the phone was called "High Speed Composite Device" in the list of icons in the lower right corner of VMWare. I now see it listed as "High Removable Disk".

- Follow the instructions found on Developing on Device Hardware to set up debugging on Ubuntu.

That's it! Good luck.

Synchronizing Google and Facebook Contacts

Not being a Windows Mobile phone, the G1 obviously does not have any sort of sync capability with Exchange. There are ways to do it using SyncML (Funambol specifically) to link your Exchange and Google account, but I didn't really want to get into that. I ended up using Outlook to export all my contacts into a Windows CSV file and then imported them into Google.

Around 6 months ago, I discovered a tool called Fonebook which lets you import Facebook Contacts into Outlook, with pictures. Those pictures then go through ActiveSync to the phone. Quite handy. The problem when doing a CSV import into Google is that the pictures aren't included.

So I ended up investigating the Google Data API and Facebook Toolkit, and found that it would be relatively easy to create a sync client. That wasn't my top priority at the moment, though; I just wanted my contact pictures on my phone!

I wrote a quick tool that does a one-way sync from Facebook to Google: if a contact is found on both accounts, the Google account gets updated with the image that was found on the Facebook profile. So here you go!

I have no idea if I'll continue further work on this, since it's not a very interesting project; though I'm sure there would be quite a demand for an Android Facebook application...

Here's the source code for the very basic contact picture sync application. I had to tweak/fix the Facebook Toolkit a bit because there were some bugs in it related to the Politicians and Contact Pictures.

Post 10: Not Just a Windows Mobile Blog Anymore

I just got my T-Mobile G1 this morning. Initial thoughts from a developer's standpoint:

Pros:

- It's not Windows Mobile.

- Richer UI development tools.

- Android Market

Cons:

- It's not Windows Mobile.

- Java. (C# makes Java look like Corky from Life Goes On)

- No MSDN.

I'm going to be switching gears and doing primarily Android development until Microsoft figures out how to ship a real mobile OS.

Looking for someone to design a new skin for Klaxon! (and more bug fixes)

Been really busy as of late with a new project, but not too busy to fix some more bugs in Klaxon.

Version 2.0.0.23

- Removed the upper right default "ok" button from the OpenFileDialog. This prevents users from hitting Ok and closing the dialog when no file has been selected.

- Reverted previous change to "dismiss" Windows alarms. This was causing issues on my device and others in that the Klaxon Alarm would never show up. In its place, Klaxon now prevents Windows Alarms from flashing, playing sound, or vibrating. The notification will still show up, but at least now it is completely benign.

- You can no longer hit the Off or Snooze buttons while the phone's screen is powered off.

- Klaxon will no longer start the OpenFileDialog in the Windows directory to prevent the device from hanging while all the files are listed.

- Fixed Daylight Savings Bug!

I'm also looking for someone to design a skin or two for Klaxon, as well as a new icon! Please contact me if you are interested!

Klaxon Polishing

Version 2.0.0.21

- Fixed out of memory crash bug that would happen when browsing a directory filled with many sound files.

- Changed the Klaxon icon to something nicer looking (seen above). This requires a restart, because Windows Mobile caches icons.

- The OpenFileDialog now shows the appropriate size folder icon for your phone's resolution.

Edit: Whoops, forgot to include the link to the download!

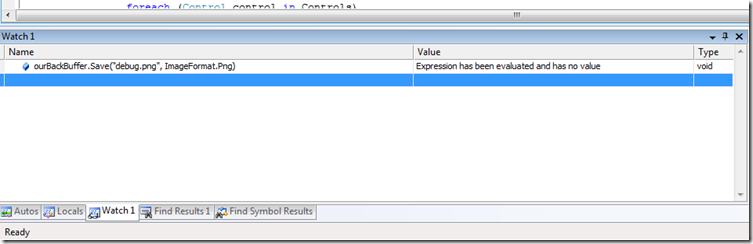

Visual Studio's "Watch" Window

One of the cool and not-so-well-known features of the Visual Studio Watch window is that it not only lets you read and modify values, but also evaluate arbitrary expressions on the system. In one of my current projects, I am doing a significant amount of rendering to a Bitmap prior to blitting it to the screen. If there's a bug in the rendering, it can be quite difficult to track down what the issue is with just a Watch window. It would be a lot more helpful to actually see the contents of the Bitmap as you are rendering it. And you can, by typing in the expression to save the bitmap out, while execution is halted! For example:

After typing that in, you can just go to the directory where the image was saved and inspect it. Very useful trick. If you have any of your own, please share!

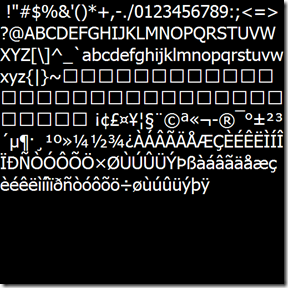

Drawing Text in OpenGL ES

If you're familiar with OpenGL, you know that it does not provide any native font/text support. On the PC, developers have access to the wglUseFontBitmaps Windows extension, which easily creates Bitmap fonts for them; unfortunately, this is not available on Windows Mobile. However, a font is essentially just a texture, so all the tools necessary to draw text are available.

There are traditionally two approaches to drawing text on the screen:

- Using GDI, draw the string to a Bitmap and load it into OpenGL for blitting.
  - Pros:
    - Easy to do.
    - Really fast if your text isn't changing. (2 triangles)
  - Cons:
    - You need a texture per string, which can be costly.
    - The texture objects are basically immutable and not reusable.
- Using GDI, draw every printable character to a Bitmap, and then draw a series of quads that reference the specific texture coordinates you want to draw the corresponding letter. (creating dynamic Bitmap fonts)
  - Pros:
    - This is very efficient with memory, since any new string is simply just a new set of vertex and texture coordinates that reference a single texture.
    - Since the text is simply just a series of quads, you can do per vertex tweaking to do some really cool effects.
  - Cons:
    - More work on the GPU
    - Harder to set up and do "right".

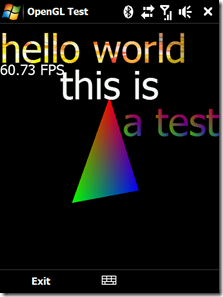

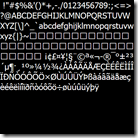

Here are the resulting textures from both methods:

| 1 Texture for a single blittable string | 1 texture that contains all printable characters. Strings are drawn by drawing a series of rects. |

The optimal approach to this problem would be a hybrid solution that would hinge on the nature of the text: static/dynamic, large/small, special effects/vanilla? But in this article, I'm going to describe how to do it the better of the two ways when on a mobile platform: the single texture lookup.

Let's take a look at the sample image again:

This sample demonstrates a couple of the unique capabilities of the Bitmap font method:

- The FPS and "this is" text is just standard text rendering. Notice that their geometry can overlap and not interfere with each other's rendering. This is because the Bitmap font is actually just a GL_ALPHA texture. The visible portions of a letter have a non zero alpha.

- The "a test" text is per character coloring. The top half is red, while the bottom left is green, and the bottom right is blue.

- The "hello world" text is a multitextured font. The Bitmap font texture was merged with the flame texture on the right to create a cool textured string effect!

So, there are two challenges to creating a proper Bitmap font:

- Determining the width/height of each character in a given font, and generating the resultant texture and character lookup position/dimension.

- Creating vertex array and texture coordinate array from a given string (ie, "hello world").

Text is not as trivial to render as you may think at first glance. Characters are not just a series of identical sized quads that are lined up left to right. Each character has its own width, and one character's quad can actually overlap into another character. For example, look at these "foos". Notice how the "f" somewhat falls into the area over the first "o".
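The second challenge, turning a string into vertex and texture coordinate arrays, can be sketched roughly as follows. This is an illustration under stated assumptions, not the code from the sample: the `Glyph` struct, its fields, and `BitmapFontLayout.Build` are hypothetical names, the glyph table is assumed to have been measured already, and y is assumed to grow downward. The key detail is that the pen moves by each glyph's advance rather than its width, which is what lets one quad overlap the next.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical glyph record: where the character sits in the font texture
// (in texels) and how it is positioned relative to the pen.
public struct Glyph
{
    public int X, Y, Width, Height; // rectangle inside the font texture
    public int OffsetX;             // may be negative: the quad starts left of the pen
    public int Advance;             // how far the pen moves; may be less than Width
}

public static class BitmapFontLayout
{
    // Fills vertices and texCoords with two triangles (6 vertices, 2 floats each)
    // per character. Characters missing from the glyph table are skipped.
    public static void Build(string text, IDictionary<char, Glyph> glyphs,
        int textureWidth, int textureHeight,
        out float[] vertices, out float[] texCoords)
    {
        List<float> v = new List<float>();
        List<float> t = new List<float>();
        float penX = 0;

        foreach (char c in text)
        {
            Glyph g;
            if (!glyphs.TryGetValue(c, out g))
                continue;

            // Quad position in screen units.
            float left = penX + g.OffsetX;
            float right = left + g.Width;
            float top = 0;
            float bottom = g.Height;

            // Texture coordinates normalized to the 0..1 range.
            float u0 = (float)g.X / textureWidth;
            float u1 = (float)(g.X + g.Width) / textureWidth;
            float v0 = (float)g.Y / textureHeight;
            float v1 = (float)(g.Y + g.Height) / textureHeight;

            // Triangle 1: top-left, bottom-left, top-right.
            // Triangle 2: top-right, bottom-left, bottom-right.
            v.AddRange(new float[] { left, top, left, bottom, right, top,
                                     right, top, left, bottom, right, bottom });
            t.AddRange(new float[] { u0, v0, u0, v1, u1, v0,
                                     u1, v0, u0, v1, u1, v1 });

            // Advance by the glyph's advance, not its width, so quads may overlap.
            penX += g.Advance;
        }

        vertices = v.ToArray();
        texCoords = t.ToArray();
    }
}
```

The two arrays are in the layout that a vertex pointer and a texture coordinate pointer expect for a triangle list, so a new string costs only a new pair of arrays against the same font texture.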

Adding Google's My Location to your Application

A few people have asked me how I get a GPS fix so fast in GL Maps. I wish I could say I had some secret sauce, but I'd be fibbing. The truth is that I'm using the Google Gears Geolocation API. Google Gears is pretty much the new hotness. The Geolocation API determines your geocode by use of several sources:

- GPS (obviously)

- WiFi access points

- Cell Tower IDs

- Your IP

Generally between the first 3, you can get a pretty accurate location. Unfortunately Google Gears is an ActiveX control that is intended to only be used through JavaScript (it is only accessible through late binding). And though accessing methods through reflection on the PC is feasible, .NET CF does not support late binding with COM. So, that left two possible solutions:

- Create a C++ DLL that handles all the late binding with COM (very gross) and PInvoke into that

- Figure out a way to somehow get the result back from JavaScript (hosted in a WebBrowser control)

The second option is rather tricky though; the WebBrowser class does not give you access to the DOM on Windows Mobile. So after banging my head on that for a while, I figured out a crafty way to do it: through the URI of the browser.

The Google Gears Geolocation sample looks as follows:

    function successCallback(p) {
      var address = p.gearsAddress.city + ', '
                  + p.gearsAddress.region + ', '
                  + p.gearsAddress.country + ' ('
                  + p.latitude + ', '
                  + p.longitude + ')';
      clearStatus();
      addStatus('Your address is: ' + address);
      window.location = "http://deadlink?lat=" + p.latitude + "&lon=" + p.longitude;
    }

    function errorCallback(err) {
      var msg = 'Error retrieving your location: ' + err.message;
      setError(msg);
    }

    try {
      var geolocation = google.gears.factory.create('beta.geolocation');
      geolocation.watchPosition(successCallback, errorCallback, {
        enableHighAccuracy: false,
        gearsRequestAddress: true
      });
    } catch (e) {
      // Gears is missing or refused to create the geolocation object.
      setError('Error using Geolocation API: ' + e.message);
    }

Note the change I made on the 9th line (where I set window.location): once we have received the geocode from the asynchronous call, the JavaScript attempts to change the browser's address to a link containing the latitude and longitude in the query string. The WebBrowser class has a Navigating event that fires whenever the URI is changing. This event also gives you the option to look at the URI being navigated to and optionally cancel it through the WebBrowserNavigatingEventArgs parameter.

So, when the Navigating event fires, I cancel it, and grab the latitude and longitude from the URI:

    // Requires: using System; using System.Globalization; using System.Text.RegularExpressions;
    static readonly Regex myLatRegex = new Regex("lat=(.*?)&");
    static readonly Regex myLonRegex = new Regex("lon=(.*)");

    void myBrowser_Navigating(object sender, System.Windows.Forms.WebBrowserNavigatingEventArgs e)
    {
        // If the browser tries to navigate, cancel it, and pick up the lat and long
        // from the URI it tried to navigate to.
        e.Cancel = true;
        try
        {
            string url = e.Url.ToString();
            Match latMatch = myLatRegex.Match(url);
            Match lonMatch = myLonRegex.Match(url);
            if (!latMatch.Success || !lonMatch.Success)
                return;

            Geocode geo = new Geocode();
            // JavaScript writes numbers with '.', so parse with the invariant culture
            // rather than the device's regional settings.
            geo.Latitude = double.Parse(latMatch.Groups[1].Value, CultureInfo.InvariantCulture);
            geo.Longitude = double.Parse(lonMatch.Groups[1].Value, CultureInfo.InvariantCulture);
            UpdateGeocode(geo);
        }
        catch (Exception)
        {
            // Deliberately swallowed: a malformed URI just means no location update.
        }
    }

Obviously this is a hack, but it is one that will not fail and is easy to maintain as the Google Gears API evolves. And it will work on both the PC and Windows Mobile!
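Since the parsing step is the part most likely to break quietly, it can help to pull it out of the event handler into a pure function that is testable without a browser control. The following is a sketch along those lines, not part of the original code: `GeocodeUri` and `TryParse` are hypothetical names, and the regexes are a slightly stricter variation on the ones above so that the order of the query parameters does not matter.

```csharp
using System;
using System.Globalization;
using System.Text.RegularExpressions;

// Hypothetical helper; only the lat/lon query string format comes from the post.
public static class GeocodeUri
{
    // Match each value up to the next '&' or the end of the string.
    static readonly Regex ourLatRegex = new Regex(@"[?&]lat=([^&]*)");
    static readonly Regex ourLonRegex = new Regex(@"[?&]lon=([^&]*)");

    // Returns true and fills latitude/longitude if both values are present and numeric.
    public static bool TryParse(string url, out double latitude, out double longitude)
    {
        latitude = 0;
        longitude = 0;

        Match lat = ourLatRegex.Match(url);
        Match lon = ourLonRegex.Match(url);
        if (!lat.Success || !lon.Success)
            return false;

        try
        {
            // JavaScript writes numbers with '.', so parse culture-invariantly.
            double parsedLat = double.Parse(lat.Groups[1].Value, CultureInfo.InvariantCulture);
            double parsedLon = double.Parse(lon.Groups[1].Value, CultureInfo.InvariantCulture);
            latitude = parsedLat;
            longitude = parsedLon;
            return true;
        }
        catch (FormatException)
        {
            return false;
        }
        catch (OverflowException)
        {
            return false;
        }
    }
}
```

The Navigating handler would then shrink to cancelling the navigation, calling `GeocodeUri.TryParse(e.Url.ToString(), ...)`, and updating the geocode when it returns true.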

GL Maps API Beta Release

I've been spending the past few days fleshing out the GL Maps API: my goal is to allow any developer to view, modify, or add content into the map. That's the approach Microsoft and Google have taken with their JavaScript/web clients, but neither of those runs particularly well on a phone. And they're not 3D and accelerometer savvy either! So, I'm hoping that other bored engineers at XDA-Developers decide to write their own plug-ins and help make this a great application. :)

So, to foster that, I'm open sourcing the "standard" plug-ins I've written so far:

- GPS and Google Gears Geolocation Plug-In: This requires that Google Gears be installed on your phone. Download the CAB from http://dl.google.com/gears/current/gears-wince-opt.cab

- Nav Sensor Plug-in

- Seattle Metro Bus Tracker

GL Maps SDK and source code to the standard plug-ins.

Developers wishing to make their own plug-ins can do so pretty easily.

- Create a new C# Smart Device Class Library Project (.NET CF 3.5). Name it GLMaps.PlugInName. Your assembly/project name must start with "GLMaps." so that GL Maps knows that it is a plug-in assembly.

- Add a reference to GLMapsPlugin.dll (included in the source code download).

- Create a public class and implement the following interface:

    using System;
    using System.ComponentModel;
    using System.Windows.Forms;

    namespace GLMaps
    {
        // Summary:
        //     IGLMapsPlugin defines the Plugin interface used by the GL Maps application.
        public interface IGLMapsPlugin : IDisposable
        {
            // Summary:
            //     MapObjects contains a list of objects that are to be shown on the map.
            BindingList<IGLMapsObject> MapObjects { get; }
            //
            // Summary:
            //     Return the Plugin's MenuItem that should be added to the GL Maps application
            //     menu. If null, this plugin will not have a MenuItem.
            MenuItem MenuItem { get; }
            //
            // Summary:
            //     Name of the IGLMapsPlugin as it is seen in the list of Plugins in GL Maps.
            string Name { get; }
            //
            // Summary:
            //     TimerInterval is the interval in milliseconds at which the OnTimer method
            //     is called.
            int TimerInterval { get; }

            // Summary:
            //     Initialize is the first method called by the IGLMapsPluginHost. When called,
            //     the plugin should do all its necessary one time setup.
            //
            // Parameters:
            //   host:
            void Initialize(IGLMapsPluginHost host);
            //
            // Summary:
            //     OnTimer is called by the Plugin host every TimerInterval. If the Plugin needs
            //     to update data on a heartbeat, it should be done in this method. OnTimer
            //     is called on the UI thread, so any operations that may take a long period
            //     of time should take place on a separate thread so as not to hang the UI.
            void OnTimer();
        }
    }

Simple plug-ins should only take 10~20 lines of code!
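As a rough idea of what such a plug-in might look like, here is a do-nothing skeleton. It is a sketch, not one of the shipped plug-ins: `HelloPlugin`, its `Ticks` property, and the `GLMaps.Hello` namespace are made up, and the stand-in declarations at the top exist only so the block compiles on its own. In a real project you would delete the stand-ins and reference GLMapsPlugin.dll and System.Windows.Forms instead.

```csharp
using System;
using System.ComponentModel;

namespace GLMaps.Hello
{
    // --- Stand-ins so this sketch compiles on its own. The real types live in
    // --- GLMapsPlugin.dll and System.Windows.Forms; their members are not shown here.
    public interface IGLMapsObject { }
    public interface IGLMapsPluginHost { }
    public class MenuItem { }

    public interface IGLMapsPlugin : IDisposable
    {
        BindingList<IGLMapsObject> MapObjects { get; }
        MenuItem MenuItem { get; }
        string Name { get; }
        int TimerInterval { get; }
        void Initialize(IGLMapsPluginHost host);
        void OnTimer();
    }

    // --- The plug-in itself (hypothetical example).
    public class HelloPlugin : IGLMapsPlugin
    {
        private readonly BindingList<IGLMapsObject> myObjects = new BindingList<IGLMapsObject>();
        private IGLMapsPluginHost myHost;
        private int myTicks;

        public BindingList<IGLMapsObject> MapObjects { get { return myObjects; } }
        public MenuItem MenuItem { get { return null; } }   // null: no menu entry
        public string Name { get { return "Hello Plug-In"; } }
        public int TimerInterval { get { return 5000; } }   // milliseconds

        // Not part of the interface; exposed only so the heartbeat can be observed.
        public int Ticks { get { return myTicks; } }

        public void Initialize(IGLMapsPluginHost host)
        {
            // One time setup goes here; keep the host for later use.
            myHost = host;
        }

        public void OnTimer()
        {
            // Called on the UI thread: keep this cheap, push slow work to another thread.
            myTicks++;
        }

        public void Dispose()
        {
            myObjects.Clear();
        }
    }
}
```

A plug-in that actually shows something would add its own IGLMapsObject implementations to MapObjects, typically from Initialize or from a background thread kicked off by OnTimer.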

Note:

This API will change and evolve in the next few weeks as I fill any holes or add new features. Any breaking changes will require a recompilation of existing plug-ins.

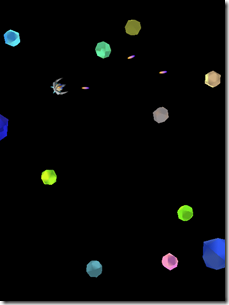

GL Maps - Your World is 3D and Your Maps should be Too

So what happens when you take the Sensor API, Managed OpenGL ES, a Tiled Map Client, and the Seattle Metro Bus Web Service and mash it all into a single application? An accelerometer controlled 3D map viewer with real time bus tracking, of course. The level of awesomeness of this application is difficult to explain unless you have an HTC Touch Diamond to play with. I'll release a video shortly.

In addition, I've updated the Tiled Map Client code significantly to support multiple rendering engines (currently GDI and OpenGL ES). There's a minor breaking change for existing users, but quite a few new features have been added. I'll release that code after I finish bug fixing.

Here's the CAB file for GL Maps for those interested Diamond users.

Usage:

- You can pan using your finger obviously.

- Use the Nav wheel to zoom in and out.

Upcoming Features:

- Fully 3D terrain

- Directions

- Search

- Map Overlays such as Satellite, Terrain, and Traffic

- "Over the Shoulder" mode for when you are driving, the camera will be behind your car and following you

Note:

The Red Bubble that you see in Seattle is where I live. I will be changing that in a future version to be the user's approximate location.

Silverlight 2.0 Too Heavy for Windows Mobile - Pun Intended

I managed to get my hands on an internal copy of Silverlight 2.0 for Windows Mobile and have been playing around with it. It makes me a sad panda. It's so unbelievably slow. It might be because it's a Debug build. It might be because it doesn't support hardware graphics acceleration. Or it might be because they tried to fit the desktop architecture onto a mobile phone. Take your pick, your guess is as good as mine. But Silverlight application development on Windows Mobile does not look promising.

Sensory Overload for the Samsung Omnia

After fixing up the OpenGL ES wrapper to work with Vincent 3D, porting this game to the Omnia was simply a recompile!

Since the Omnia does not have a "Nav" wheel, click the center button to shoot a bullet, and "swipe" it to use the special spray attack. Just a reminder this isn't meant to be a full featured game, just a demo on how to write 3D games. :)

Managed OpenGL ES and the Vincent 3D Implementation

A few months ago, I wrote a managed wrapper for OpenGL ES. This wrapper worked great on the HTC Touch Diamond, but I never really tested it out on the Vincent 3D OpenGL ES software implementation. This was partly due to my misconception that Vincent 3D was a Common-Lite implementation of OpenGL ES (which would mean it only supports fixed point). As of recently, I've been working with OpenGL ES on a regular basis, and realized that Vincent 3D was in fact a Common implementation (it supports floating point as well as fixed point)!

What this means is that the managed wrapper should in fact be fully functional on top of it. However, there was a bug in the wrapper (or maybe Vincent 3D) that prevented it from working properly. So after I addressed it, the wrapper is working fine, for the most part: there seem to be some floating point precision issues with Vincent 3D. I am guessing those may be due to some sort of fixed point conversion taking place in the pipeline, but I am unsure.

Anyhow, as you can see from the screenshots above, my triangle sample and Sensory Overload are both working on an emulator! Albeit, it is quite slow, and the emulator doesn't have a G-Sensor that allows you to control the ship. :)

Here's the updated Managed OpenGL ES source code.

More Klaxon Cleanup in 2.0.0.19

Changes in the latest version:

- When integrating with Home Screen, Klaxon now cancels all Windows alarms upon firing an alarm.

- Turning off the screen when snoozing is now an option in settings.

- Klaxon will now ask for confirmation if you try to create or edit an alarm that is set as disabled. Some users were accidentally disabling the alarm and pressing OK, and the alarm would obviously not go off.

Klaxon - Accelerometer/G-Sensor Enabled Alarm Clock now supports the Samsung Omnia!

Samsung Omnia users will be pleased to hear that Klaxon now supports their accelerometer. That's the only change in 2.0.0.18. Click here for the Klaxon CAB setup file.

Using Samsung Omnia's Accelerometer/G-Sensor from Managed Code

Many months ago, I wrote an API to access the HTC Touch Diamond's Accelerometer from managed code. Those who investigated the code a bit deeper probably noticed that I had stubbed out an interface, IGSensor. I did that in anticipation that many Windows Mobile devices in the coming months would include an accelerometer. It turns out I was right. The idea behind the IGSensor interface was to provide a generic unified API that a developer could use to access the accelerometer of any device without having to worry about which device it was.

Anyhow, I'd gotten several requests to investigate the Samsung API, if there was one. Not an Omnia owner myself, I wasn't particularly motivated. Then yesterday, one of the requestors informed me that Spelomaniac from modaco.com had figured out how to access the Samsung Omnia's accelerometer.

We happened to have an Omnia at my workplace, Kiha, so I grabbed that, and ported the code so it was supported by my API. So yeah, it's all working!

I highly recommend that all developers download the updated API and make the minor changes necessary so that Omnia users can make use of the HTC Touch Diamond accelerometer applications! Here are the new breaking changes in the API:

- HTCGSensor is now a private class.

- SamsungGSensor is a new private class.

- Instead of creating a HTCGSensor or SamsungGSensor explicitly, developers should use the new GSensorFactory.CreateGSensor method to get the appropriate IGSensor object for the phone the application is running on.

- IGSensor is now IDisposable. Developers should Dispose of the object once they are finished using it. Not doing so may leave resources or a thread hanging and the application may fail to exit.

That's it! The code changes you need to make should only take you a few minutes.
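For illustration, the migration might look something like the sketch below. Only `GSensorFactory.CreateGSensor`, `IGSensor`, and the fact that it is IDisposable come from the list above. The types declared here are stand-ins so the block compiles on its own; `DeviceName`, `OpenSensors`, `FakeSensor`, and `SensorDemo` are hypothetical and are not part of the real Sensor API.

```csharp
using System;

// Stand-ins so the sketch compiles on its own. The real IGSensor and
// GSensorFactory come from the Sensor API download; their actual members
// are not reproduced here.
public interface IGSensor : IDisposable
{
    string DeviceName { get; }   // hypothetical member, for illustration only
}

public static class GSensorFactory
{
    public static int OpenSensors;   // hypothetical counter, used to show disposal

    public static IGSensor CreateGSensor()
    {
        OpenSensors++;
        return new FakeSensor();
    }

    private class FakeSensor : IGSensor
    {
        private bool myDisposed;

        public string DeviceName { get { return "fake sensor"; } }

        public void Dispose()
        {
            if (myDisposed)
                return;
            myDisposed = true;
            OpenSensors--;
        }
    }
}

public static class SensorDemo
{
    public static string ReadDeviceName()
    {
        // Ask the factory instead of constructing HTCGSensor or SamsungGSensor.
        // The using block calls Dispose even if the body throws.
        using (IGSensor sensor = GSensorFactory.CreateGSensor())
        {
            return sensor.DeviceName;
        }
    }
}
```

An application that keeps the sensor around for its whole lifetime would instead store the IGSensor in a field and call Dispose when its main form closes.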

Click here to download the new Windows Mobile Unified Sensor API.

I'll be making the changes necessary to Klaxon this weekend so it runs on the Omnia. :)

Here is the original C++ code for the HTC GSensor emulator in case you are interested. I would not recommend using the HTC Accelerometer emulator, because it does not support Orientation Change notifications through the Windows registry.

by Koush at 12:58 AM 20 comments


Regarding Licensing for the Source Code found on this site

I've gotten asked about licensing the code on this site a few times. Just to clarify, it's a Creative Commons License, so it's all free. Commercial, non-commercial, whatever. All I ask is that you give credit where credit is due (link my site somewhere in your application or website :). They're mostly just projects I get bored/tired of, and figure someone else may take interest. If you have questions regarding the code, feel free to contact me on AIM at koushd. I'm also open to hosting it on Codeplex if that floats your boat; just give me a good reason and I will!

P.S. GPL is lame.

P.P.S. Yeah, I went there.

Klaxon Changes in 2.0.0.17

Adding skinning support introduced a lot of bugs, but I'm pretty confident I've worked most of them out now. I'll be releasing new sample skins for Klaxon soon.

The changes in 2.0.0.17 include:

Download and extract the new TestSkin into \Program Files\Klaxon\TestSkin to create your own skins.

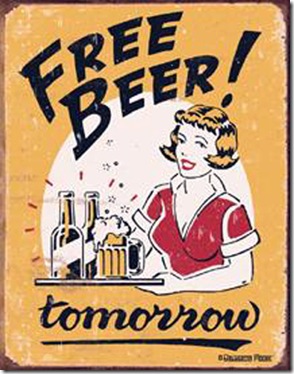

Post 9, The Rant Continues: TabIndex is Terrible. Windows Mobile needs "Smart" Key Navigation.

Yeah, so it's been well over 30 Days as I originally predicted, so I'm renaming this series 30 Posts of Bitching about .NET Compact Framework.

Windows Mobile "Standard" [0] device development is a pain. I've never owned one, because I can't really live without a touch screen.

I still fondly remember many years ago, when I first fired up the Visual Studio Form designer with the Smartphone form factor. I started dragging my controls out, doing the usual, then I noticed something odd: I didn't have a Button control on my toolbox. I searched around for a bit, and assumed that my Visual Studio installation was hosed. I created a new Pocket PC project, and the Button control was there. So I dragged one out, and then changed the target platform to Smartphone. I reopened the form and saw this intimidating hazard sign:

Attempting to run the application resulted in a NotSupported exception. Apparently simple Button controls aren't supported on Windows Mobile Standard for whatever reason. I guess this is because there is no touch screen, and thus no mouse clicks, so they just dropped support for the Button control and left the developer with LinkLabels. That really brings application development to a grinding halt if you are trying to target both Smartphone and Pocket PC. [1]

Man, I've really strayed from the point of this article. Getting back on track: The purpose of this post is to discuss something I mentioned briefly in a previous post. One of the annoying issues associated with Smartphone development is that since you are restricted to only keyboard input, your application has to be very aware of key navigation. The user should be able to intuitively navigate through all the forms quickly and easily. However, from an API level, all the developer is given is TabIndex. TabIndex is a property that you can set on any control that lets the Windows API know the tab order of controls. For example, the Up button on a Smartphone would take you back to the control with the previous TabIndex, while the Down button would take you to the next TabIndex.

All is well in the world of TabIndex and simple 5 minute applications. TabIndex works great if all your controls are laid out vertically, with one control per line. But if you need to do any real Windows Mobile application development, chances are that TabIndex will not cut it. Take for example the following Form:

For visual reference, the yellow region is a panel housing 4 LinkLabels, and the white region is the Form housing the other 6. The TabIndex of each Link is shown.

Navigating through this form is gross. For one, the Left and Right keys don't actually navigate left or right to other Controls. And the Up and Down Keys simply go through the Controls in TabIndex order. So, if "Panel TabIndex 0" had focus, pressing up would take you to "Form TabIndex 5" and not "Form TabIndex 2". Conversely, if "Form TabIndex 2" had focus, pressing down would take you to "Form TabIndex 3" and not "Panel TabIndex 0".

Basically, once you introduce any sort of grid or panels, TabIndex probably will not work for you. At that point, you need to hook the KeyDown events and handle the navigation to and from each Control... manually... for every Form. And as development progresses, and the UI changes, you better make sure that you update all those nasty KeyDown event hooks so your navigation doesn't break!

This is not ideal; it introduces code bloat, bugs, and is in general just tedious to do. To that end, I wrote an algorithm that would analyze the Controls in a Form and figure out the best Navigation target from any given Control (if there was one).

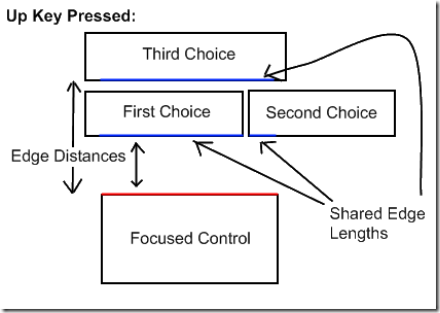

I'm not going to really get into the algorithm in great detail. Basically the way it works is that it looks at the key that was pressed and finds the corresponding edge of the Control. It then compares that edge against the opposite edge of every other control and picks the one with the best "score". A score is comprised of 2 factors: how close the edges are to each other and how much of the edge is "shared". Yeah, that probably made no sense. Maybe this picture will help:

So, yeah, Edge Distance and Shared Edge Length are the two weighting factors in determining the best navigation target.
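To give a feel for how those two factors can be combined, here is a small sketch that works on plain rectangles. It is not the algorithm from the download: `NavKey`, `Rect`, `NavigationScore.FindBest`, and especially the weighting (shared length minus distance) are assumptions chosen to keep the example short.

```csharp
using System;
using System.Collections.Generic;

public enum NavKey { Up, Down, Left, Right }

// Hypothetical rectangle type standing in for a Control's bounds.
public struct Rect
{
    public int Left, Top, Right, Bottom;

    public Rect(int left, int top, int right, int bottom)
    {
        Left = left;
        Top = top;
        Right = right;
        Bottom = bottom;
    }
}

public static class NavigationScore
{
    // Returns the index of the best candidate, or -1 if nothing lies in that direction.
    public static int FindBest(Rect from, IList<Rect> candidates, NavKey key)
    {
        int best = -1;
        double bestScore = double.MinValue;

        for (int i = 0; i < candidates.Count; i++)
        {
            Rect to = candidates[i];
            int distance; // gap between the pressed-side edge and the candidate's opposite edge
            int shared;   // length of the overlap between the two edges

            switch (key)
            {
                case NavKey.Up:
                    distance = from.Top - to.Bottom;
                    shared = Overlap(from.Left, from.Right, to.Left, to.Right);
                    break;
                case NavKey.Down:
                    distance = to.Top - from.Bottom;
                    shared = Overlap(from.Left, from.Right, to.Left, to.Right);
                    break;
                case NavKey.Left:
                    distance = from.Left - to.Right;
                    shared = Overlap(from.Top, from.Bottom, to.Top, to.Bottom);
                    break;
                default: // NavKey.Right
                    distance = to.Left - from.Right;
                    shared = Overlap(from.Top, from.Bottom, to.Top, to.Bottom);
                    break;
            }

            // A negative distance means the candidate is not on that side at all.
            if (distance < 0)
                continue;

            // Assumed weighting: reward shared edge length, penalize edge distance.
            double score = shared - distance;
            if (score > bestScore)
            {
                bestScore = score;
                best = i;
            }
        }
        return best;
    }

    // Length of the overlap between the intervals [a0, a1] and [b0, b1].
    private static int Overlap(int a0, int a1, int b0, int b1)
    {
        return Math.Max(0, Math.Min(a1, b1) - Math.Max(a0, b0));
    }
}
```

With this weighting, a control directly above that shares the whole edge beats a closer control that only clips the corner, which matches the intuition behind "Form TabIndex 2" being the expected target when pressing Up from the panel.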

This navigation code is actually a small part of the overall UI framework I use. I've stripped out the algorithm and repackaged it so that it can be used by anyone. Usage is pretty straightforward. The Navigation class has two members:

- FindFocusedControl - Find the control on the Form that is currently focused.

- GetBestNavigationTarget - Given a Control and a navigation Key (Up, Down, Left, or Right), find the best navigation target.

To catch navigation events easily, you can do it at a form level by enabling KeyPreview. Then override OnKeyDown and use those two methods to find the Control that should receive focus. For example, here's the code from the sample:

    using System;
    using System.Windows.Forms;

    namespace KeyNavigationDemo
    {
        public partial class NavigationDemoForm : Form
        {
            public NavigationDemoForm()
            {
                InitializeComponent();

                // By enabling key preview, the form receives all key events
                // that would otherwise be received and processed by a control.
                // We can choose to handle these events.
                KeyPreview = true;
            }

            private void myExitMenuItem_Click(object sender, EventArgs e)
            {
                Close();
            }

            private void mySmartNavigationMenuItem_Click(object sender, EventArgs e)
            {
                mySmartNavigationMenuItem.Checked = !mySmartNavigationMenuItem.Checked;
            }

            protected override void OnKeyDown(KeyEventArgs e)
            {
                if (mySmartNavigationMenuItem.Checked)
                {
                    switch (e.KeyCode)
                    {
                        case Keys.Up:
                        case Keys.Down:
                        case Keys.Right:
                        case Keys.Left:
                            {
                                Control focused = Navigation.FindFocusedControl(this);
                                if (focused != null)
                                {
                                    Control navigateTarget = Navigation.GetBestNavigationTarget(focused, e.KeyCode);
                                    e.Handled = true;
                                    if (navigateTarget != null)
                                        navigateTarget.Focus();
                                }
                            }
                            break;
                    }
                }
                base.OnKeyDown(e);
            }
        }
    }

Here's the full source code to the navigation algorithm and the sample demonstrating how to use it. The sample application is the same one you see in the above image with 10 LinkLabels. By default, Smart Navigation is disabled; use the arrow keys to navigate around the form using TabIndex. Then enable Smart Navigation in the Menu and use the arrow keys to navigate intuitively.

The sample is just a demonstration of the navigation; keep in mind that some Controls may want to handle certain key presses. For example, text boxes should generally receive the left and right key presses to navigate within the entered text and not to another Control.

[0] "Standard" is the official Microsoft term for a Windows Mobile non touch screen device. Professional is the term for touch screen devices. It used to go by "Smartphone" and "Pocket PC" back in 2003, and then they changed it to Standard and Professional, so that's how it is now. I think. Don't quote me. In fact, forget you ever read this.

[1] I should mention, there is nothing to stop a developer from basically inheriting from Control and overriding OnClick and thus creating a Button control. This works just fine, and it is just benign code on a Smartphone; behind the scenes those mouse methods are just a result of receiving WM_LBUTTONDOWN/UP messages. So, I ended up creating my own ButtonBase class in the UI framework I wrote/use.
